Guide to the Role of Semiconductors in AI: Powering Modern Artificial Intelligence Systems

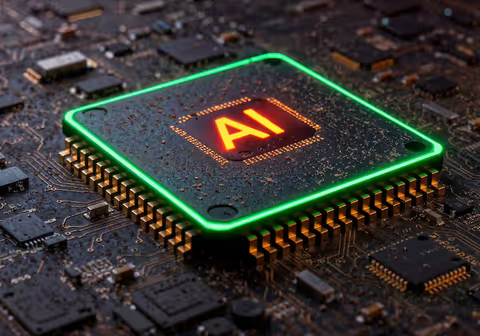

Artificial Intelligence (AI) is transforming industries such as healthcare, finance, and transportation. Behind this rapid progress lies a critical technology: semiconductors. Semiconductors are fundamental to AI because they are the building blocks of the computing systems that process large amounts of data.

From smartphones to data centers, semiconductors enable machines to learn, analyze, and make decisions. Understanding their role helps explain how AI systems operate efficiently and continue to evolve.

Overview / Basics of the Topic

What Are Semiconductors?

Semiconductors are materials whose electrical conductivity falls between that of conductors and insulators. Silicon is the most common example and is widely used in electronic devices.

They are used to create components like:

- Microprocessors

- Memory chips

- Graphics processing units (GPUs)

- Integrated circuits

What Is AI Processing?

AI processing involves handling large datasets, running algorithms, and performing calculations at high speed. These operations require powerful and efficient hardware, which semiconductors provide.

Importance or Benefits

The role of semiconductors in AI is crucial for several reasons:

1. High-Speed Computation

Training and running AI models can involve billions of arithmetic operations for a single pass over a batch of data. Semiconductor-based chips make processing at this scale fast enough to be practical.
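To give a rough sense of scale, the operations in a single fully connected neural-network layer can be counted directly. The sketch below assumes the layer is computed as a matrix multiplication, which takes about 2 × batch size × inputs × outputs operations (one multiply and one add per weight for each example). The function name `dense_layer_ops` and the layer sizes are illustrative assumptions, not figures from any particular model.

```python
def dense_layer_ops(batch_size: int, inputs: int, outputs: int) -> int:
    """Approximate multiply-and-add count for one dense layer.

    Each output value needs `inputs` multiplications and about as many
    additions, hence the factor of 2.
    """
    return 2 * batch_size * inputs * outputs


# Hypothetical layer: 64 examples, 4096 inputs, 4096 outputs.
ops = dense_layer_ops(batch_size=64, inputs=4096, outputs=4096)
print(f"{ops:,} operations")  # 2,147,483,648 operations
```

Even this one hypothetical layer needs about two billion operations per batch, and a full model stacks many such layers.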

2. Energy Efficiency

Modern AI systems consume significant energy. Advanced semiconductor designs help reduce power consumption while maintaining performance.

3. Scalability

Semiconductors allow AI systems to scale from small devices to large cloud-based infrastructures.

4. Real-Time Processing

Applications like voice assistants and autonomous systems depend on real-time data processing, which requires chips that can respond with low latency.

Types / Features / Key Aspects

Key Semiconductor Components in AI

Different semiconductor components play specific roles in AI systems:

1. Central Processing Units (CPUs)

- Handle general computing tasks

- Manage system operations

2. Graphics Processing Units (GPUs)

- Designed for parallel processing

- Ideal for training AI models

3. Tensor Processing Units (TPUs)

- Application-specific chips, originally developed by Google, for AI workloads

- Optimized for the tensor (matrix) operations used in machine learning

4. Neural Processing Units (NPUs)

- Built specifically for neural networks

- Used in smartphones and edge devices

Comparison of AI Semiconductor Types

| Component | Main Function | Best Use Case |
|---|---|---|
| CPU | General processing | System control |
| GPU | Parallel computation | AI training |
| TPU | AI-specific tasks | Deep learning |
| NPU | Neural network acceleration | Edge AI |
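The comparison table above can also be read as a simple lookup from use case to component. The following is a minimal sketch of that idea; the dictionary `BEST_COMPONENT` and the helper `suggest_component` are hypothetical names introduced here for illustration, and real hardware selection depends on many more factors than a single label.

```python
# Hypothetical mapping that mirrors the comparison table above.
BEST_COMPONENT = {
    "system control": "CPU",
    "ai training": "GPU",
    "deep learning": "TPU",
    "edge ai": "NPU",
}


def suggest_component(use_case: str) -> str:
    """Return the component the table lists for a use case.

    Falls back to the CPU, which handles general computing tasks.
    """
    return BEST_COMPONENT.get(use_case.strip().lower(), "CPU")


print(suggest_component("Edge AI"))      # NPU
print(suggest_component("AI training"))  # GPU
```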

How It Works / Process

Step-by-Step Role of Semiconductors in AI

1. Data Input

- AI systems receive data such as images, text, or audio.

2. Processing via Chips

- Semiconductor chips process this data using algorithms.

3. Model Training

- GPUs or TPUs perform complex calculations to train AI models.

4. Inference Stage

- NPUs or CPUs use trained models to make predictions.

5. Output Generation

- Results are delivered, such as recommendations or decisions.
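The steps above can be sketched end to end with a toy example. The code below is a deliberately tiny illustration in plain Python that runs on an ordinary CPU: it fits a straight line with gradient descent and then uses it for a prediction. The data, the function names `train` and `predict`, and the learning-rate and epoch values are all assumptions chosen for the sketch; real AI systems use far larger models and run training on GPUs or TPUs through dedicated frameworks.

```python
def train(samples, epochs=1000, lr=0.05):
    """Model training: fit y = w * x + b with plain gradient descent."""
    w, b = 0.0, 0.0
    n = len(samples)
    for _ in range(epochs):
        # Gradients of the mean squared error with respect to w and b.
        grad_w = sum(2 * (w * x + b - y) * x for x, y in samples) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in samples) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


def predict(model, x):
    """Inference: apply the trained parameters to a new input."""
    w, b = model
    return w * x + b


# 1. Data input: toy (x, y) pairs that follow y = 2x + 1.
data = [(0.0, 1.0), (1.0, 3.0), (2.0, 5.0), (3.0, 7.0)]

# 2-3. Processing and model training.
model = train(data)

# 4. Inference on an input the model has not seen.
prediction = predict(model, 4.0)

# 5. Output generation: the result is close to 9, since 2 * 4 + 1 = 9.
print(f"Predicted value for x=4: {prediction:.2f}")
```

Training repeats the same arithmetic many times over the data, while inference is a single cheap evaluation. This difference is one reason the two stages are often assigned to different chips.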

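Key Concept: Parallel Processing

Parallel processing means performing many calculations at the same time rather than one after another. AI workloads consist largely of independent arithmetic operations, which is why GPUs, designed for parallel processing, are well suited to training AI models.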
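As a rough illustration of parallel processing, the sketch below splits one calculation (a dot product) into independent chunks, hands each chunk to a separate worker, and then combines the partial results. The names `partial_dot` and `parallel_dot` are hypothetical. In standard CPython, threads generally do not speed up pure-Python arithmetic, so this shows only how the work is decomposed, not the speedup that a GPU achieves by running thousands of such pieces at once in hardware.

```python
from concurrent.futures import ThreadPoolExecutor


def partial_dot(chunk):
    """Multiply and sum one slice of the two vectors."""
    xs, ys = chunk
    return sum(x * y for x, y in zip(xs, ys))


def parallel_dot(a, b, workers=4):
    """Split a dot product into independent chunks and combine the results."""
    size = max(1, -(-len(a) // workers))  # ceiling division
    chunks = [(a[i:i + size], b[i:i + size]) for i in range(0, len(a), size)]
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return sum(pool.map(partial_dot, chunks))


a = list(range(1, 9))  # 1, 2, ..., 8
b = [2] * 8
print(parallel_dot(a, b))  # 72
```

The key property is that no chunk depends on another chunk's result, so all of them can be computed at the same time.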

Latest Trends or Updates (Recent Focus)

The role of semiconductors in AI continues to evolve with new developments:

1. AI-Specific Chip Design

Companies are designing chips specifically for AI workloads, improving efficiency and reducing latency.

2. Edge AI Growth

AI is increasingly running on local devices such as smartphones and IoT devices rather than only in the cloud. This requires compact and efficient semiconductor chips.

3. Advanced Manufacturing Nodes

Smaller process nodes (such as 5 nm and below) pack more transistors into the same area, which generally improves performance and energy efficiency.

4. Integration of AI in Everyday Devices

From smart assistants to wearable technology, semiconductors are enabling AI in daily applications.

5. Increased Demand for Data Center Chips

Cloud computing platforms rely heavily on semiconductor hardware to run large AI models.

Common Mistakes or Considerations

When learning about or working with AI hardware, keep these common points in mind:

1. Assuming All Chips Are the Same

Different AI tasks require different types of semiconductor chips.

2. Ignoring Power Consumption

AI systems can consume significant energy. Efficient chip design is essential.

3. Overlooking Scalability

Hardware must support future growth in AI workloads.

4. Complexity of AI Hardware

AI hardware systems can be complex and require proper integration.

5. Cost vs Performance Trade-Off

Higher-performance chips typically cost more and draw more power, so the choice should balance performance against budget and energy limits.

Conclusion

The role of semiconductors in AI is central to the advancement of modern technology. These tiny components enable machines to process data, learn patterns, and make decisions efficiently.

From CPUs and GPUs to specialized AI chips like TPUs and NPUs, semiconductors form the foundation of AI systems. As AI continues to grow, innovations in semiconductor technology will play a key role in shaping the future of intelligent systems.